Benchmarks

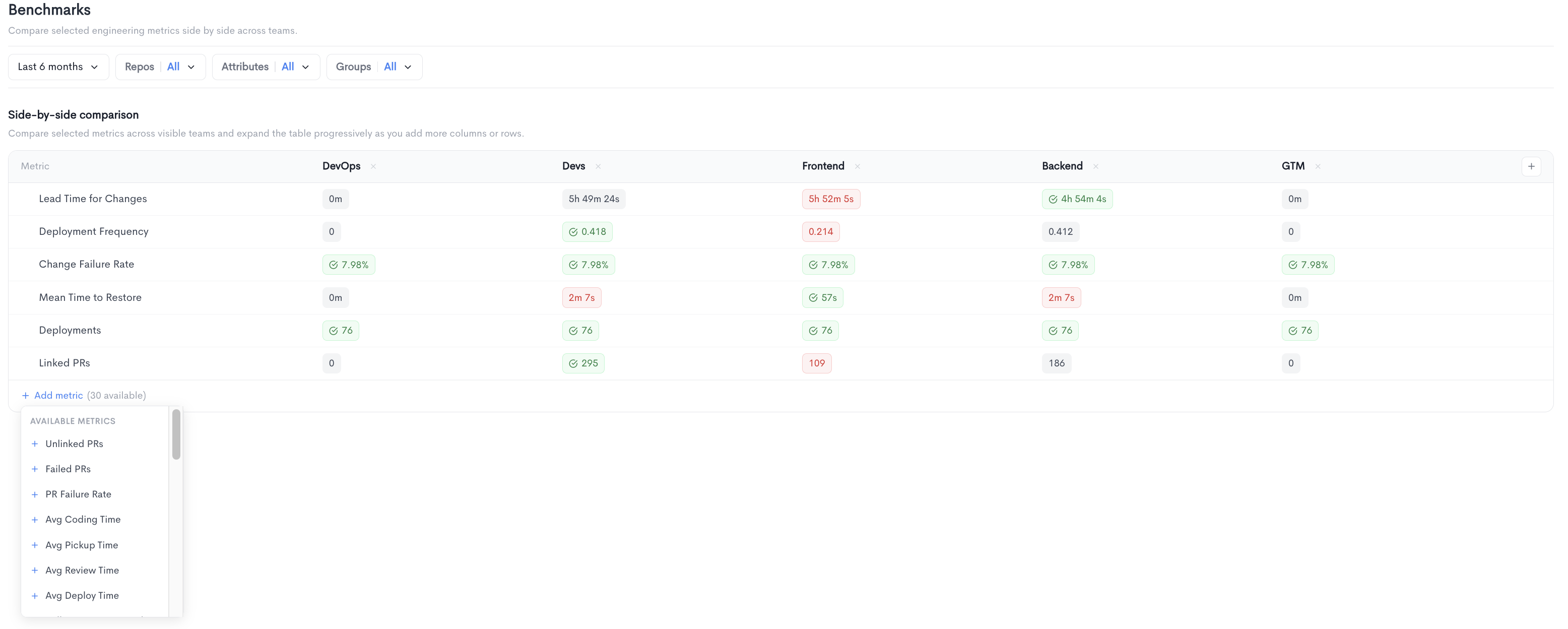

The Benchmarks report allows you to compare selected engineering metrics side by side across teams. It is accessible under Health in the left sidebar.

The report displays a side-by-side comparison table in which each column represents a team and each row represents a metric. Values are color-coded to highlight performance differences between teams, so outliers stand out at a glance. To add another team to the comparison, use the + button at the end of the column headers.

The following metrics are displayed by default:

- Lead Time for Changes - The median time from first commit to production deployment.

- Deployment Frequency - How often code is deployed to production.

- Change Failure Rate - The percentage of deployments that result in a failure.

- Mean Time to Restore - The average time to recover from a production incident.

- Deployments - The total number of deployments in the selected period.

- Linked PRs - The number of pull requests linked to a ticket or issue.
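
To make the definitions above concrete, here is a minimal Python sketch of how the first five default metrics could be computed for one team. It is an illustration only: the `Deployment` record, its field names, the `summarize` function, and the sample data are all hypothetical and do not reflect the product's actual data model or calculation. It also assumes that every production incident corresponds to a failed deployment, which may not match how the report measures Mean Time to Restore.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record (not the product's data model)."""
    first_commit_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set once a failed deployment is recovered


def hours(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in hours."""
    return (end - start).total_seconds() / 3600


def summarize(deployments: list, period_days: int) -> dict:
    """Compute the metrics for one team over a period of `period_days` days."""
    total = len(deployments)
    lead_times = [hours(d.first_commit_at, d.deployed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Assumption: recovery time is measured from the failed deployment.
    restore_times = [
        hours(d.deployed_at, d.restored_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "lead_time_for_changes_hours": median(lead_times) if lead_times else None,
        "deployment_frequency_per_day": total / period_days,
        "change_failure_rate_pct": 100 * len(failures) / total if total else None,
        "mean_time_to_restore_hours": mean(restore_times) if restore_times else None,
        "deployments": total,
    }


# Made-up sample data for a single team over an 8-day period.
team_a = [
    Deployment(datetime(2024, 1, 1), datetime(2024, 1, 2)),
    Deployment(datetime(2024, 1, 3), datetime(2024, 1, 3, 12),
               failed=True, restored_at=datetime(2024, 1, 3, 14)),
    Deployment(datetime(2024, 1, 5), datetime(2024, 1, 7)),
    Deployment(datetime(2024, 1, 8), datetime(2024, 1, 8, 6),
               failed=True, restored_at=datetime(2024, 1, 8, 10)),
]

report = summarize(team_a, period_days=8)
print(report)
```

With this sample data, the lead times are 24, 12, 48, and 6 hours, so the median is 18 hours. Four deployments over 8 days gives a frequency of 0.5 per day, two failures out of four gives a 50% change failure rate, and recovery times of 2 and 4 hours average to 3 hours. Linked PRs is omitted because it depends on ticket links rather than deployment records.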

Additional metrics can be added to the table using the + Add metric button. There are 30 available metrics in total, including:

- Unlinked PRs, Failed PRs, PR Failure Rate

- Avg Coding Time, Avg Pickup Time, Avg Review Time, Avg Deploy Time

- Pull Requests Created, PRs Closed, PRs Merged

- Reviewed PRs, Unreviewed PRs, Rubber-Stamped PRs

- PR Traceability (%), Lines Added, Lines Deleted

- Avg Time to Merge, Issues Created, Bugs Created

- Avg Issue Cycle Time, Active Issues, Issues Resolved

- Stuck Issues, Issues Touched, Story Points Completed

- Avg Story Points, Commits Created, Issues per PR

- Knowledge Sharing Index, Active Days

Filters available at the top of the report include date range, Repos, Attributes, and Groups.
